In February 2024, Klarna published one of the most-cited AI support benchmarks in the industry: 2.3 million conversations in a month, two-thirds of all chats handled end-to-end, resolution time cut from 11 minutes to under 2. By May 2025, the CEO was publicly hiring human agents back and saying the automation push "went too far" and "compromised service quality."

Most posts covering this story stop at the arc. This one doesn't. We use Klarna's three-beat sequence (initial win → quality erosion → hybrid reset) as the blueprint for an engineering exercise. That means pulling apart why the erosion happened (by the CEO's own account, cost became too predominant an evaluation factor), and translating the lessons into concrete tooling: a three-tier intent model, a routing decision tree you can ship next sprint, escalation handoff mechanics that stop customers from repeating themselves, and a QA scorecard with stop-loss thresholds you set before you scale.

Because "bots bad / humans good" is not a system design. This is.

Key Takeaways

- Klarna's AI assistant scaled the boring-but-necessary support work fast: 2.3M conversations in a month, handling two-thirds of chats, with a reported resolution time dropping to <2 minutes vs 11 minutes previously (Klarna press release, 2024).

- The later reset wasn't framed as "AI failed." It was framed as cost and deflection becoming the main organising principle—and Klarna's CEO said that tilt showed up as "lower quality" (Maginative, 2025).

- The practical lesson: hybrid design is an operating system, not a one-time channel pick. In practice, that means clear ticket tiering, fast escalation, context-rich handoffs, and metrics that punish "bad containment" (when the bot ends the chat without actually solving the issue) (eesel.ai, Decagon glossary).

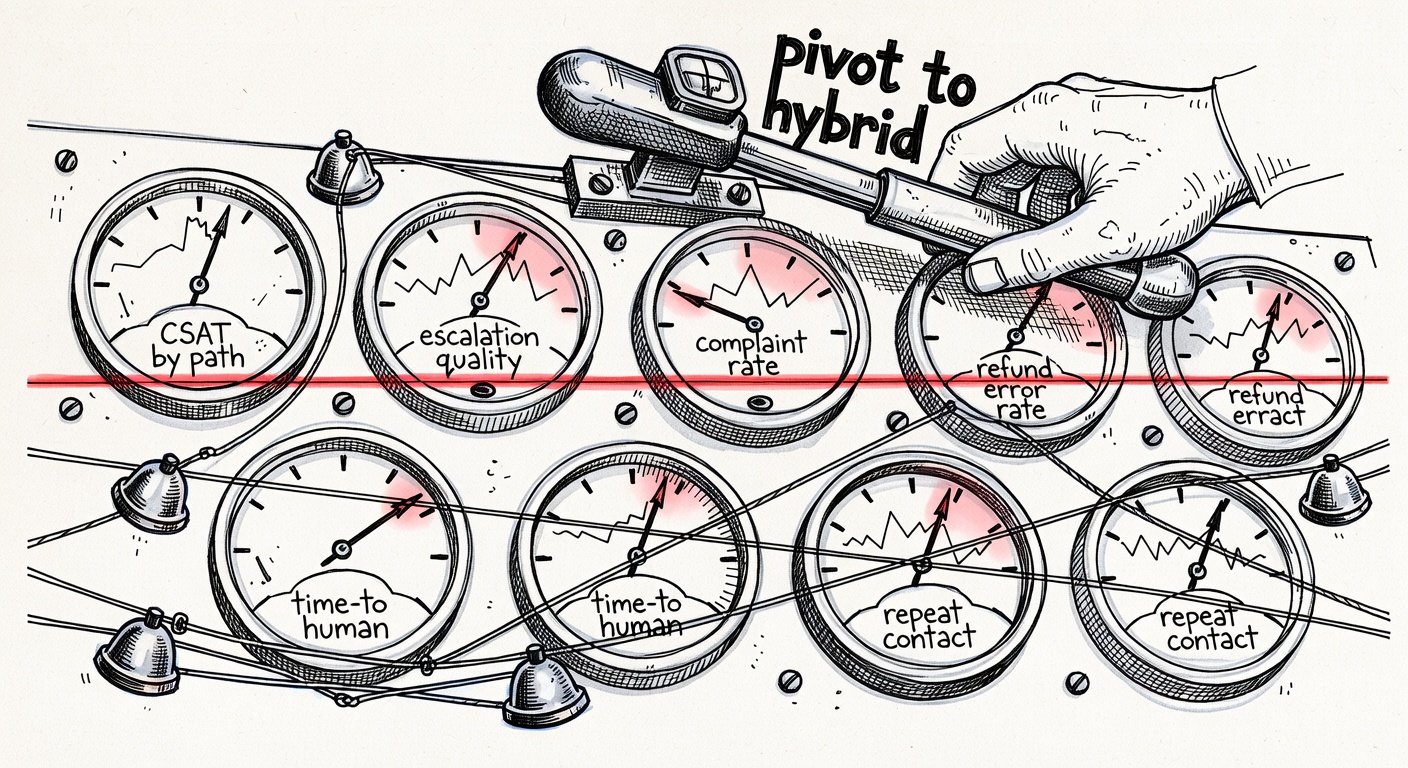

- If you optimise only for cost per contact, you can quietly grind down trust and lifetime value. Build stop-loss thresholds (CSAT by path, recontact rate, complaint rate, refund error rate), and be ready to change course early.

Skip straight to implementation?

Atiendia builds hybrid AI assistants trained on your full document corpus and connected to your live systems — orders, CRM, APIs — with human escalation built in from day one. If the blueprint below is what you're after, try the live demo or book a 30-minute call.

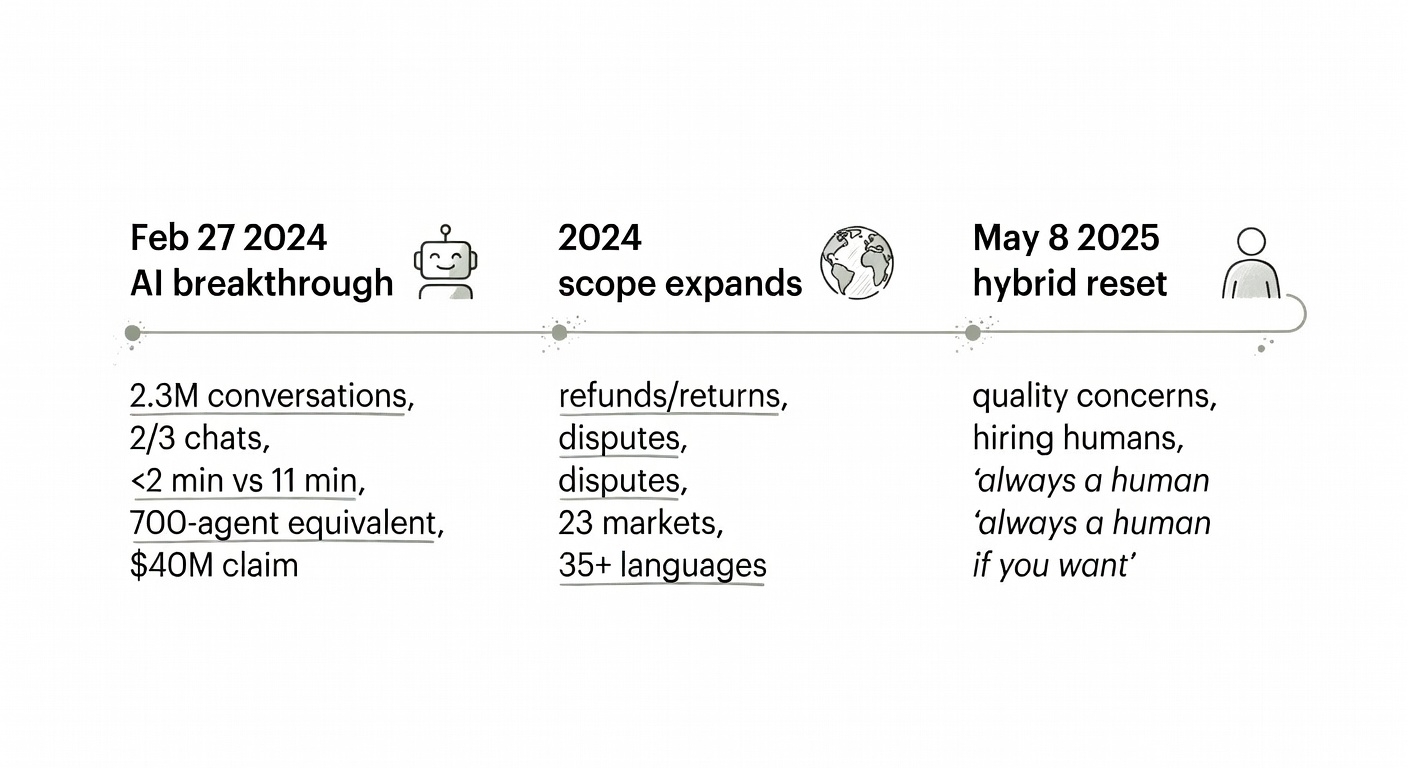

Timeline: From 'AI breakthrough' to a hybrid reset (2024 → 2025)

A lot of the chatter around this story gets treated like a grab bag of disconnected headlines. Put it on a timeline, and the through-line becomes a lot easier to spot.

- Feb 27, 2024 — public "AI breakthrough" moment: Klarna announced an AI assistant "powered by OpenAI" and shared first-month performance claims: 2.3M conversations, two-thirds of chats, "equivalent work of 700 full-time agents", "on par" CSAT, and <2 minutes vs 11 minutes to resolve errands (Klarna press release, 2024).

- 2024 — scope expansion implied: The assistant was positioned as broadly capable: refunds/returns, payment issues, cancellations, disputes, invoice inaccuracies, plus multilingual support across 23 markets and 35+ languages.

- May 8, 2025 — quality-driven recalibration reported: Klarna was reportedly "actively recruiting human customer service agents" after the CEO said the automation push "went too far" and quality took a hit; he stressed customers need a clear path to a person: "there will be always a human if you want" (Maginative, 2025).

Laid out like this, the contrast is hard to miss. Imagine a customer asking, "Where's my refund?" If it's already approved and the status is sitting cleanly in the system, an AI bot can return the answer in seconds. But if it's tangled up in a policy exception, a partial shipment, or a dispute workflow, a lot of customers won't be satisfied with "a helpful explanation"—they'll want a human. And they'll want one now.

After that, the more interesting question isn't "should Klarna have used AI?" It's: what did the system optimise for, and what did it quietly make difficult?

What Klarna claimed worked (and how to interpret the numbers responsibly)

Klarna's press release is unusually dense with metrics, which is admirable, credit where it's due. But the numbers are only useful if we read them the way you read an on-call dashboard at 2 a.m.: curious, a little suspicious, and definitely not the way you'd skim a glossy marketing deck.

Here are the headline claims:

- 2.3 million conversations in the first month (Klarna)

- Two-thirds of customer service chats handled by the assistant

- "Equivalent work" of 700 full-time agents

- CSAT "on par with human agents"

- 25% drop in repeat inquiries

- Resolution time: <2 minutes vs 11 minutes previously

- Coverage: 23 markets, 24/7, 35+ languages

- Financial estimate: $40M profit improvement in 2024

So what's the responsible interpretation?

- "Handled" isn't the same thing as "resolved." In a lot of orgs (especially when leadership wants a win), "the AI handled it" quietly morphs into "the AI contained it." But containment only counts if the customer's problem is actually fixed, not just politely shooed away without escalation (Decagon, eesel.ai).

- Speedups can be legit and still accumulate quality debt. Dropping from 11 minutes to under 2 minutes is a jaw-dropping improvement on paper (Klarna). But if "speed" mostly means "we found a faster way to close the ticket," while the gnarly edge cases get glossed over, the pain tends to boomerang later: recontacts, chargebacks, or complaints that reappear in email, phone, or social.

- The "700 agents" equivalence is directional. Treat it as a rough signal about volume and deflection, not clean accounting. It doesn't tell us which issue categories got automated, what the failure modes looked like, or how often humans had to do the unglamorous cleanup downstream (the part nobody puts in a press release).

And to be fair, Klarna did tuck in an early hybrid tell: "customers can still choose to interact with live agents if they'd prefer" (Klarna). That line matters a lot more in hindsight than it probably did in 2024.

Why Klarna reportedly dialled back: quality, brand trust, and the 'human-out' requirement

The 2025 reporting reads less like a dramatic "AI is over" headline and more like a pretty classic quality-and-brand recalibration.

Maginative reports that Klarna began pulling human agents back into the loop, with CEO Sebastian Siemiatkowski saying the automation push "went too far" and "compromised service quality" (Maginative). Two quotes feel especially operational:

- "As cost unfortunately seems to have been a too predominant evaluation factor… what you end up having is lower quality" (Maginative)

- "There will be always a human if you want" (Maginative)

The "human-out" requirement is where hybrid support either stands up in the real world — or quietly starts cracking. Bury escalation and customers feel trapped; make it obvious and fast, and the AI can function like a decent front door instead of a locked gate (Stonly).

A very real failure mode is billing disputes and fraud anxiety. When someone thinks they've been charged incorrectly — or they suspect an account takeover — they're not just asking for information. They're asking for judgment, reassurance, and sometimes a bit of discretion. Script-y responses (even when technically correct) can come off as dismissive. That's usually when CSAT starts to droop.

If you've watched a few automation rollouts from the inside, the usual suspects behind declining satisfaction tend to look like:

- Issue complexity drift: bots start on FAQs, then get nudged into disputes/refunds where policies have edge cases.

- Escalation friction: customers have to repeat themselves, or the handoff loses context — classic "bad containment" behaviour (eesel.ai).

- Tone/brand voice mismatch: answers that are "right," but still feel cold — especially when money stress is part of the story.

- Hallucinations or overconfidence: even rare wrong claims can poison trust; it's a big reason human-in-the-loop keeps getting recommended for higher-stakes interactions (Salesforce).

If you're building your own hybrid support setup, the takeaway isn't "don't automate." It's: don't automate without a clearly defined quality backstop.

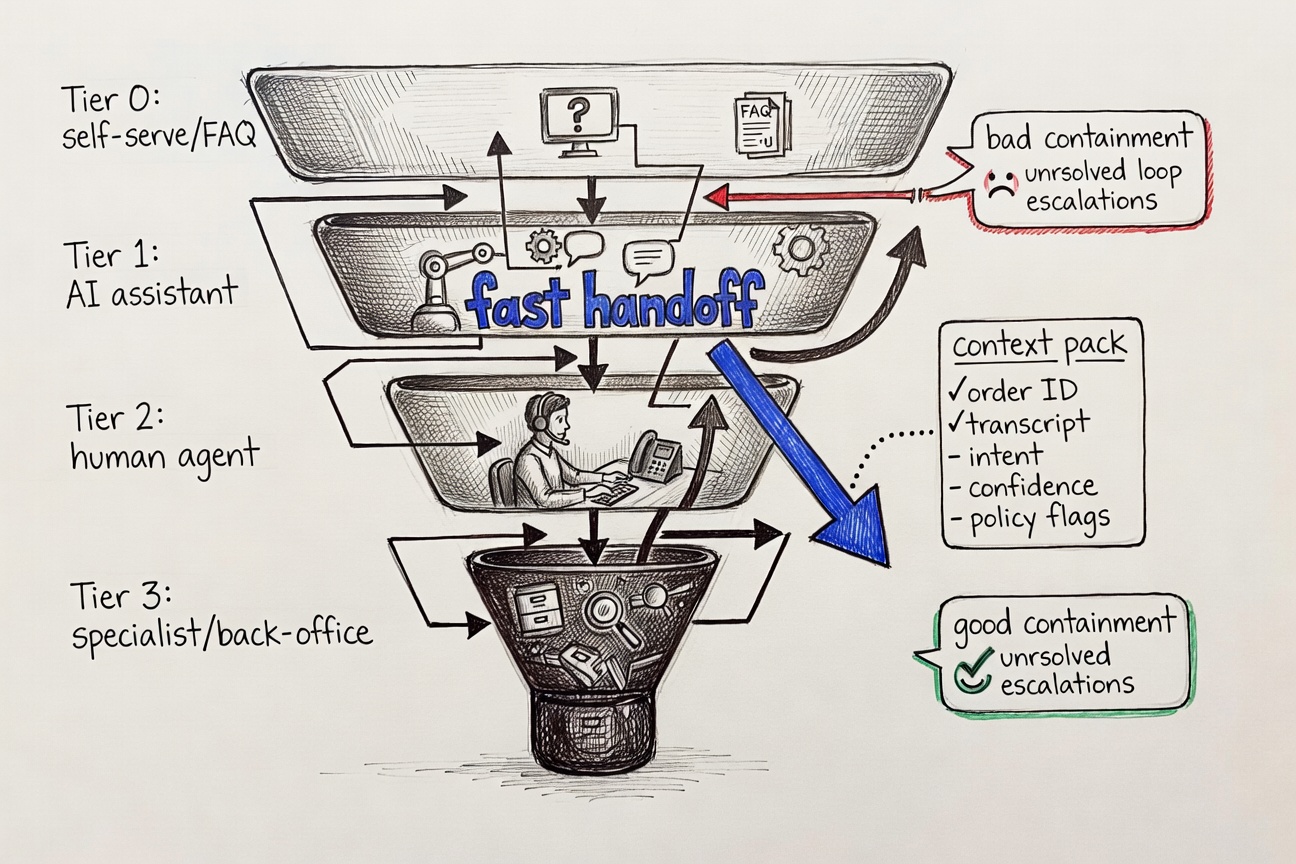

Designing the hybrid support model: roles for AI, roles for humans, and handoff mechanics

Most teams eventually converge on the same not-so-glamorous truth about "hybrid" support: it only works when each side has a clearly scoped job. Let AI take the fast, repeatable, low-risk work; let humans handle judgment, empathy, and the oddball edge cases that never fit neatly into a flowchart (TeamSupport).

Here's the operating blueprint that teams tend to settle into — usually after a few rough weeks and "why is the bot doing that?" postmortems:

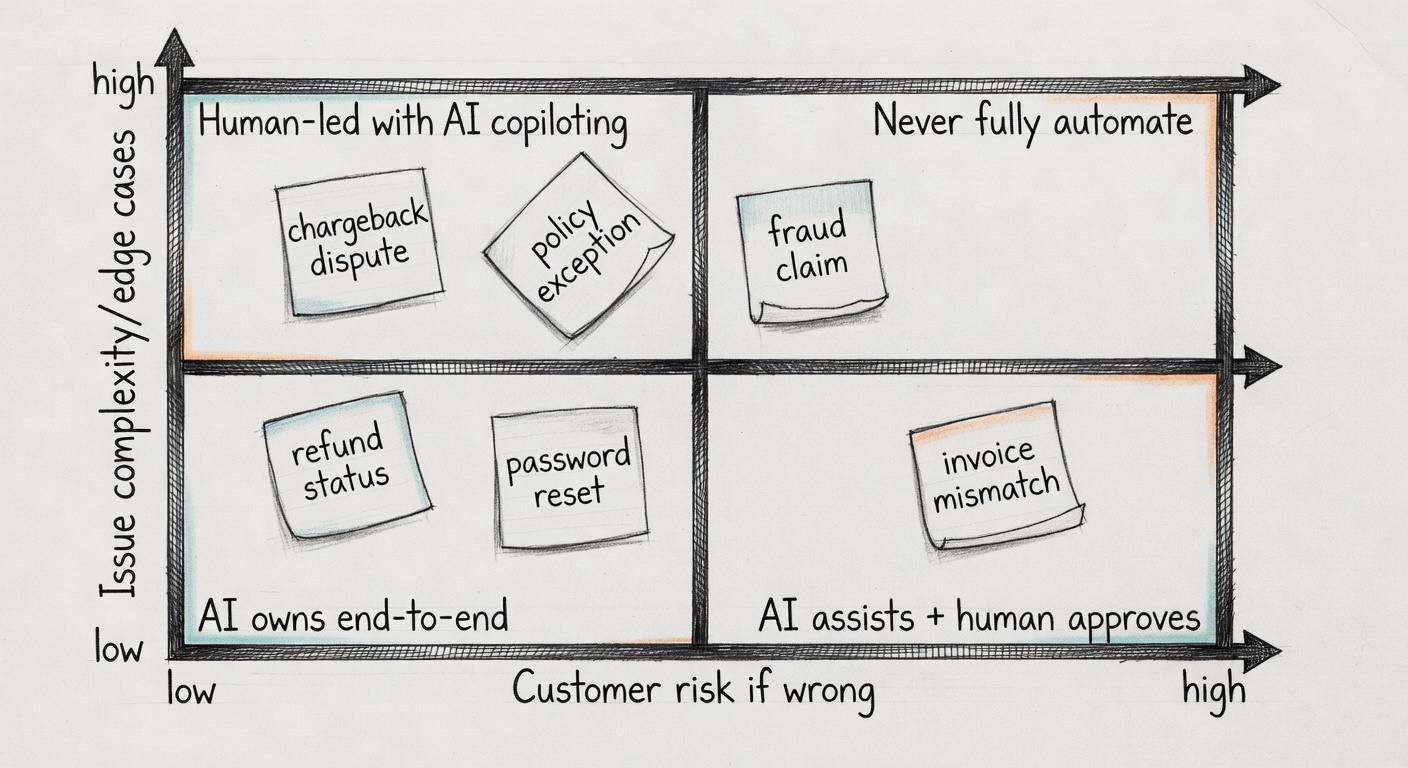

1) Tier your intents by risk, not just volume

- Tier A (low risk, high volume): order/payment status, basic FAQs, simple eligibility checks. AI can typically close these end-to-end.

- Tier B (medium risk): refunds/returns with exceptions, address changes, account access troubleshooting. AI should help and propose next steps, but humans take over when confidence dips.

- Tier C (high risk / high emotion): disputes, fraud, chargebacks, regulatory complaints, vulnerable customers. Default to a human, with AI summarising and assembling the relevant context.

TeamSupport describes the same split in slightly different words: AI for the routine stuff; humans for the complex, emotionally charged situations (TeamSupport).
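The three tiers can be written down as a plain lookup table before any routing logic exists. The sketch below is one way to do that, not a reference implementation: the intent labels are hypothetical stand-ins for whatever your classifier emits, and sending unknown intents to Tier C is an assumption made here so that unmapped traffic fails towards a human.

```python
from enum import Enum


class Tier(Enum):
    A = "low risk, high volume"
    B = "medium risk"
    C = "high risk / high emotion"


# Hypothetical intent labels; replace with the labels your classifier emits.
INTENT_TIERS = {
    "order_status": Tier.A,
    "payment_status": Tier.A,
    "basic_faq": Tier.A,
    "eligibility_check": Tier.A,
    "refund_with_exception": Tier.B,
    "address_change": Tier.B,
    "account_access": Tier.B,
    "dispute": Tier.C,
    "fraud": Tier.C,
    "chargeback": Tier.C,
    "regulatory_complaint": Tier.C,
}


def tier_for(intent: str) -> Tier:
    """Return the risk tier for an intent.

    Assumption: anything not explicitly mapped is treated as Tier C,
    so new or misclassified intents default to a human.
    """
    return INTENT_TIERS.get(intent, Tier.C)
```

Keeping the table in one place also makes a rollback cheap: moving an intent from Tier A to Tier B is a one-line change.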

2) Route with explicit triggers

Routing should be driven by signals you can actually observe and log — not vibes:

- Low confidence in intent or answer → escalate

- Negative sentiment / repeated rephrasing → escalate

- High-value customer tier → escalate sooner

- Policy exception detected (edge-case keywords, tool errors) → escalate

Also: keep a visible "talk to a human" option at all times. Klarna explicitly said customers could still choose live agents in 2024 (Klarna). That's not a throwaway UX checkbox; it's part of the deal you're implicitly making with customers.
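As a rough sketch, the triggers above can be expressed as a function that returns the reasons for escalating, so every escalation is logged with a cause. All field names and threshold values here are illustrative assumptions, and "escalate sooner" for high-value customers is modelled as a stricter confidence floor, which is only one possible reading.

```python
from dataclasses import dataclass


@dataclass
class TurnSignals:
    confidence: float            # intent/answer confidence in [0, 1]
    sentiment: float             # below zero means negative sentiment
    rephrase_count: int          # times the customer restated the request
    high_value_customer: bool
    tool_error: bool
    exception_keyword_hit: bool
    asked_for_human: bool


# Illustrative thresholds, not recommendations.
MIN_CONFIDENCE = 0.75
MIN_CONFIDENCE_HIGH_VALUE = 0.90
MIN_SENTIMENT = -0.3
MAX_REPHRASES = 2


def escalation_reasons(s: TurnSignals) -> list[str]:
    """Return every trigger that fired; an empty list means no escalation."""
    reasons = []
    if s.asked_for_human:
        reasons.append("customer_requested_human")
    # High-value customers get a stricter floor, so they escalate sooner.
    floor = MIN_CONFIDENCE_HIGH_VALUE if s.high_value_customer else MIN_CONFIDENCE
    if s.confidence < floor:
        reasons.append("low_confidence")
    if s.sentiment < MIN_SENTIMENT or s.rephrase_count >= MAX_REPHRASES:
        reasons.append("negative_sentiment_or_rephrasing")
    if s.tool_error or s.exception_keyword_hit:
        reasons.append("policy_exception")
    return reasons
```

Returning reasons rather than a bare boolean means the log can later tell you which trigger fires most often.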

3) Build humans-in-the-loop where it matters

Humans-in-the-loop (HitL) gets marketed as the safety net for hallucinations, nuance, and brand judgment (Salesforce). In support, it usually translates to:

- AI drafts + human sends for Tier B/C

- Human QA sampling for Tier A

- Human approval for refunds above a threshold or any dispute outcome

So no, hybrid isn't "50/50." It's policy-driven.
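"Policy-driven" can be made literal. The sketch below maps a proposed action to a review mode following the three bullets above. The refund threshold, the sampling rate, and all names are hypothetical, and the hash-based sampler is simply one deterministic way to pick Tier A conversations for QA.

```python
import hashlib
from dataclasses import dataclass

REFUND_APPROVAL_THRESHOLD = 100.00  # hypothetical amount in your currency
TIER_A_QA_SAMPLE_RATE = 0.05        # hypothetical: review 5% of Tier A chats


@dataclass
class ProposedAction:
    tier: str            # "A", "B", or "C"
    kind: str            # e.g. "reply", "refund", "dispute_outcome"
    amount: float = 0.0


def review_mode(action: ProposedAction) -> str:
    """Return how a human is involved before the action takes effect."""
    if action.kind == "dispute_outcome":
        return "human_approval"
    if action.kind == "refund" and action.amount > REFUND_APPROVAL_THRESHOLD:
        return "human_approval"
    if action.tier in ("B", "C"):
        return "ai_drafts_human_sends"
    return "auto_send_with_qa_sampling"


def sampled_for_qa(conversation_id: str, rate: float = TIER_A_QA_SAMPLE_RATE) -> bool:
    """Deterministically select a fixed share of conversations for human QA."""
    digest = hashlib.sha256(conversation_id.encode()).digest()
    bucket = int.from_bytes(digest[:4], "big") / 2**32  # value in [0, 1)
    return bucket < rate
```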

A simple decision tree: contain, assist, or escalate

Here's a decision tree you can plausibly ship next sprint without turning your backlog into a bonfire:

- AI resolves end-to-end (Contain). If: Tier A intent + high confidence + tool/API success + no negative sentiment.

- AI collects info + drafts for agent (Assist). If: Tier B intent, or confidence is medium, or customer is high value.

- Immediate escalation (Never touch / Escalate). If: Tier C intent, fraud/dispute signals, legal language, or customer explicitly asks for a human.

This matches the "hybrid balance" most vendors pitch, but it keeps the logic grounded in ticket economics and risk (TeamSupport).
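In code, the tree is small. The sketch below assumes the conditions overlap in practice (a high-value customer with a Tier A question, say) and resolves that by checking escalation first, then containment, with everything else falling through to assist. That ordering, the field names, and the confidence cut-off are choices made for this example, not something the sources prescribe.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    CONTAIN = "contain"
    ASSIST = "assist"
    ESCALATE = "escalate"


@dataclass
class Conversation:
    tier: str                    # "A", "B", or "C"
    confidence: float            # in [0, 1]
    tool_success: bool
    negative_sentiment: bool
    high_value_customer: bool = False
    fraud_or_dispute_signal: bool = False
    legal_language: bool = False
    asked_for_human: bool = False


HIGH_CONFIDENCE = 0.85  # illustrative cut-off for "high confidence"


def route(c: Conversation) -> Route:
    # Escalation conditions win over everything else.
    if (c.tier == "C" or c.fraud_or_dispute_signal
            or c.legal_language or c.asked_for_human):
        return Route.ESCALATE
    # Containment requires every condition to hold.
    if (c.tier == "A" and c.confidence >= HIGH_CONFIDENCE and c.tool_success
            and not c.negative_sentiment and not c.high_value_customer):
        return Route.CONTAIN
    # Tier B, medium confidence, high-value customers, and anything unclear.
    return Route.ASSIST
```

Making assist the fallback means a missed condition costs agent time rather than a wrongly closed conversation.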

What 'good handoff' looks like (so customers don't repeat themselves)

Bad handoffs are where "AI savings" quietly turn into churn.

A good handoff should include:

- Full transcript + the customer's most recent stated goal

- Detected intent + confidence score

- Tool calls attempted (e.g., refund lookup) and outcomes

- A one-paragraph summary + a recommended next action for the agent
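One way to keep handoffs honest is to make the payload a typed object and refuse to send it when a required part is empty. The structure below is a minimal sketch of the checklist above; the class and field names are hypothetical, and a real helpdesk integration would map them onto its own ticket schema.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class ToolCall:
    name: str        # e.g. "refund_lookup"
    outcome: str     # e.g. "ok", "not_found", "timeout"


@dataclass
class Handoff:
    transcript: list[str]
    customer_goal: str           # the customer's most recent stated goal
    intent: str
    confidence: float
    summary: str                 # one paragraph for the agent
    recommended_action: str
    tool_calls: list[ToolCall] = field(default_factory=list)

    def missing_fields(self) -> list[str]:
        """Names of required fields that are empty."""
        required = ("transcript", "customer_goal", "intent",
                    "summary", "recommended_action")
        return [name for name in required if not getattr(self, name)]

    def to_json(self) -> str:
        """Serialise the handoff, including nested tool calls."""
        return json.dumps(asdict(self))
```

A non-empty `missing_fields()` result is a useful thing to count: it is a direct measure of how often agents receive an incomplete picture.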

eesel.ai argues you should measure escalation quality, not just containment, because high containment can hide frustration and channel switching (eesel.ai). Track containment by intent so you can see exactly where bots underperform (refunds often underperform status checks).

If your escalations are clean, most customers won't care that AI picked up first. If they're sloppy, they'll remember the friction.

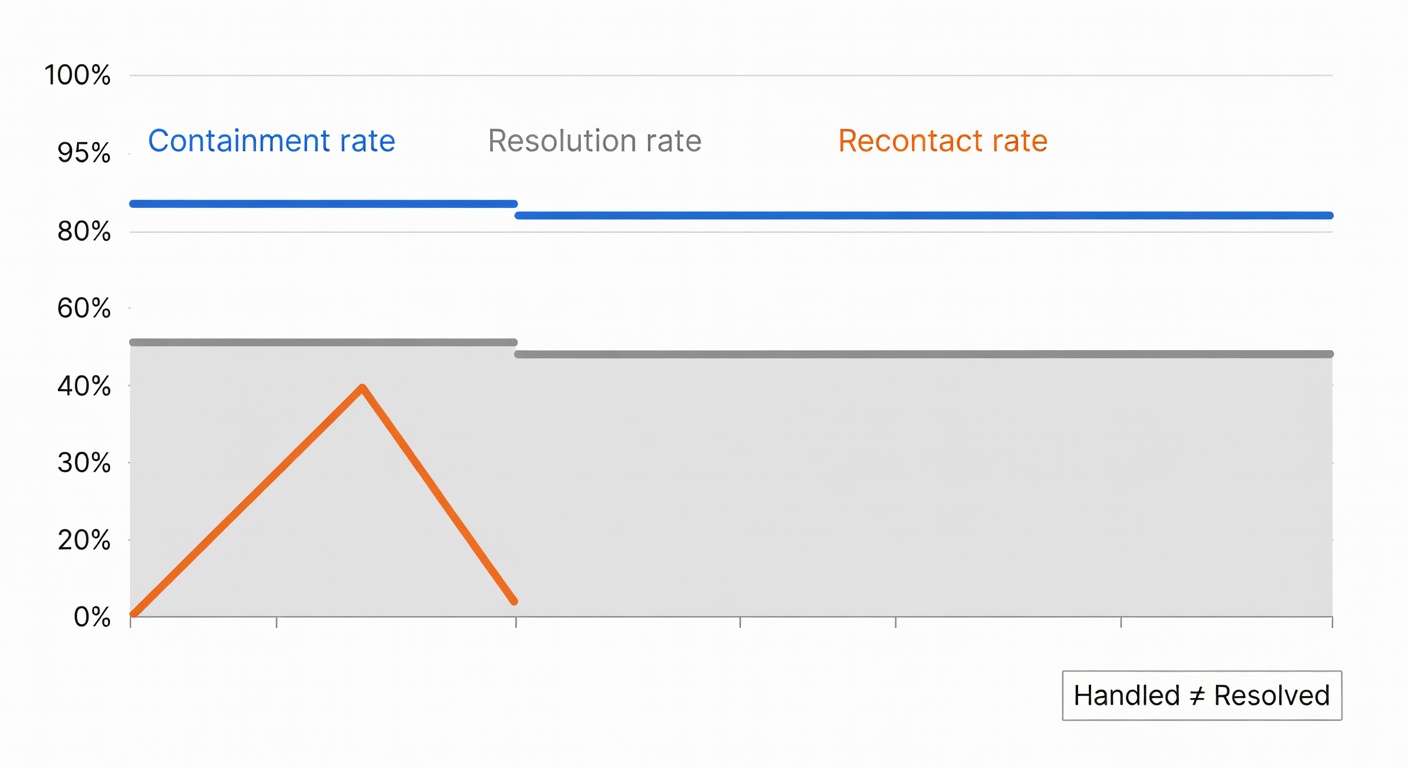

Metrics that matter in a hybrid model (beyond 'containment')

Containment rate is useful — but treat it like a gauge on the dash, not the finish line. In the real world, a small, slightly boring scorecard usually beats a sprawling dashboard that nobody trusts:

- CSAT split by path: AI-only vs escalated vs human-only

- FCR (first contact resolution): across the whole journey (AI + human)

- Recontact rate (24–72h): catches "resolved" conversations that weren't

- Complaint rate / regulator-facing tickets: an early flare for trust problems

- Time-to-resolution (TTR): not just first response time

- Cost per contact: but paired with quality metrics so you don't optimise blindly

- Escalation latency: time from "needs human" to human engaged

- Refund / adjustment error rate: especially if AI can trigger actions

Decagon's definition of containment is refreshingly crisp — % of interactions fully handled by the bot — but it also calls out the obvious catch: you only learn anything if you read it alongside satisfaction (Decagon). eesel.ai takes it a step further: if you chase containment like it's the only number that exists, you can end up with "bad containment," where customers bounce, switch channels, or come back even more irritated than they started (eesel.ai).
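To make the pairing concrete, here is a small sketch that computes containment next to two quality signals from the same records. The record shape is hypothetical, and counting an AI-only conversation followed by a recontact as "bad containment" is a proxy assumed for this example; the sources describe the idea, not this exact formula.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Optional


@dataclass
class Record:
    path: str                 # "ai_only", "escalated", or "human_only"
    csat: Optional[int]       # 1-5 survey score, None if no response
    recontacted: bool         # customer came back within your 24-72h window


def containment_rate(records: list[Record]) -> float:
    """Share of all conversations handled by the AI alone."""
    if not records:
        return 0.0
    return sum(r.path == "ai_only" for r in records) / len(records)


def bad_containment_rate(records: list[Record]) -> float:
    """Share of AI-only conversations that were followed by a recontact."""
    ai_only = [r for r in records if r.path == "ai_only"]
    if not ai_only:
        return 0.0
    return sum(r.recontacted for r in ai_only) / len(ai_only)


def csat_by_path(records: list[Record]) -> dict[str, float]:
    """Average CSAT per path, ignoring conversations with no survey response."""
    scores = defaultdict(list)
    for r in records:
        if r.csat is not None:
            scores[r.path].append(r.csat)
    return {path: sum(v) / len(v) for path, v in scores.items()}
```

Grouping the same records by intent instead of path gives the per-intent containment view mentioned earlier.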

One last, very practical guardrail: set your stop-loss thresholds before you scale. Something like, "If AI-only CSAT drops X points week-over-week, or recontact rises Y%, we roll back Tier B intents to agent-assist." Not glamorous. But it's how you avoid the slow, quiet erosion that leadership later has to unwind — often in public.
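A stop-loss rule like that is easy to encode as a weekly check. In the sketch below, the two constants stand in for X and Y and are placeholders only; the metric names and the "roll Tier B back to agent-assist" response mirror the example sentence rather than any documented standard.

```python
from dataclasses import dataclass


@dataclass
class WeeklyMetrics:
    ai_only_csat: float      # average on your CSAT scale
    recontact_rate: float    # fraction, e.g. 0.08 for 8%


# Placeholders for X and Y; agree on real values before you scale.
MAX_CSAT_DROP_POINTS = 0.3
MAX_RECONTACT_RISE_PCT = 15.0


def stop_loss_breaches(prev: WeeklyMetrics, curr: WeeklyMetrics) -> list[str]:
    """Compare this week with last week and list every threshold breached."""
    breaches = []
    if prev.ai_only_csat - curr.ai_only_csat > MAX_CSAT_DROP_POINTS:
        breaches.append("ai_only_csat_drop")
    if prev.recontact_rate > 0:
        rise_pct = (curr.recontact_rate - prev.recontact_rate) / prev.recontact_rate * 100
        if rise_pct > MAX_RECONTACT_RISE_PCT:
            breaches.append("recontact_rise")
    return breaches


def tier_b_mode(breaches: list[str]) -> str:
    """Any breach rolls Tier B intents back to agent-assist."""
    return "agent_assist" if breaches else "unchanged"
```

The point of writing it down is that the rollback decision is made in advance, not argued about after the numbers have already slipped.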

So — how do you build a hybrid AI assistant that doesn't repeat Klarna's mistakes?

The Klarna story isn't an argument against AI in customer service. It's a blueprint for doing it in the right order: deep knowledge first, tool access second, human escalation always on. Here's what that looks like in practice, and why the sequence matters more than the technology stack.

Step 1: Train the AI on your full document corpus — not just a FAQ sheet

One of the most common failure modes in AI support rollouts is a shallow knowledge base. The assistant knows your top-20 FAQ answers and crumbles the moment a customer asks about edge-case policy, a product variant, or a procedure that only lives in an internal training manual.

Quality problems in rollouts like Klarna's are rarely random. They tend to concentrate in the conversations where policy knowledge has to be precise and nuanced (disputes, billing exceptions, fraud). An AI that only ingests a help-centre export will always hit that ceiling.

The deeper the knowledge, the wider the "containment without frustration" zone. An AI assistant trained on your full document corpus — product catalogues, SOPs, pricing rules, legal terms, onboarding docs — can handle far more than one trained only on curated FAQs.

This is how Atiendia is built. Our setup process starts by ingesting all your business documents — policies, product specs, pricing tables, SOPs, CRM exports, operation manuals — and building a knowledge base the assistant reasons against in real time, not just keyword-matches. The result is an assistant that responds like an experienced employee who has read every internal document your company has ever produced, because it has.

Step 2: Connect the AI to your live systems — orders, inventory, databases, APIs

The second half of the problem is less about knowledge and more about action. An assistant that can answer "what's your refund policy?" is marginally useful. An assistant that can look up the specific order, check its current status, verify what has already been processed, and initiate the next step is the one that actually cuts resolution time and keeps CSAT high.

This is where most off-the-shelf chatbot platforms stall. They're stateless: each conversation starts from scratch, with no connection to the operational data that would let the bot actually do something rather than just explain what the customer should do themselves.

Atiendia is designed for this from the ground up. We connect your AI assistant to:

- Your database or CRM — to look up orders, customers, account history, and cases in real time

- Your calendar and scheduling tools — to book appointments and demos automatically

- Your e-commerce platform (Shopify, WooCommerce, Mercado Libre, custom API) — to verify stock, confirm orders, and process returns

- Email and helpdesk workflows — to log conversations, create tickets, and trigger follow-ups without manual entry

- Any external API you use — payment processors, logistics providers, analytics platforms, or internal ERPs

The practical effect: your assistant can resolve a Tier A inquiry end-to-end, plus the routine Tier B cases your policy allows, with no human touchpoint, and leave a clean audit trail. That's the kind of outcome behind Klarna's "$40M profit improvement" estimate, done sustainably, without the quality erosion Klarna hit when it optimised for deflection instead of resolution.

Step 3: Build human escalation in from day one — clean, fast, and with full context

This is the part most teams add last and regret first. Klarna's CEO said it in so many words: "there will be always a human if you want." That's not a product feature; it's a trust signal. And it only works if the escalation is seamless, not buried three menus deep.

Atiendia handles this through configurable handover rules designed with the Tier model above in mind:

- Define which intents always escalate to a human from the start — complaints, refund disputes, fraud suspicion, VIP customers, anything with legal or regulatory risk

- Set confidence thresholds — if the assistant's answer score falls below your threshold, it automatically routes to a human agent

- Enable "I want to speak to a person" at any point in any conversation, with zero friction and immediate routing

- Pass the full conversation context — transcript, intent summary, tool calls attempted, outcomes — directly to the human agent, so customers never have to repeat themselves

The target Atiendia designs for: >80% resolution rate without human intervention, measured against real quality signals — CSAT, recontact rate, and resolution accuracy — not just containment numbers. The remaining <20% goes to your human team with everything they need to close the conversation in one touch.

That's the hybrid model Klarna had to reverse-engineer in 2025 — after the public stumble. You can start with it on day one.

Sources

- Klarna press release (2024): AI assistant handled two-thirds of customer service chats in its first month

- Maginative (2025): Klarna dialed back its AI customer service strategy — and began hiring humans again

- eesel.ai (2025): Measuring AI containment rate and escalation quality

- Decagon glossary: What chatbot containment rate means (and how it's used)

- TeamSupport: Hybrid support models — balancing AI with human expertise

- Salesforce (2026): Humans in the loop (HitL) for AI support

- Stonly: Customer support automation and escalation design